My backup setup

I read this tweet yesterday and wondered how everything could be lost. Who would put every egg in a single basket? Then I read the comments. Some people asked me about my backup setup, and I was even asked to write a blog post about it, so here it is.

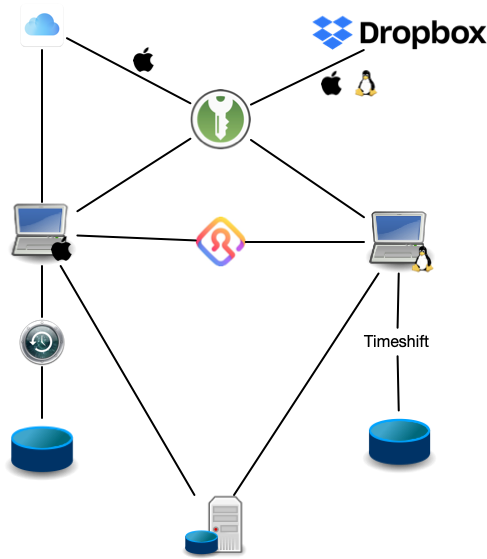

First off, my systems are split into two groups, macOS and Linux machines, and this “plays” into part of my setup.

Local Backup

For both macOS and Linux machines, I keep full system backups locally on external hard drives, where each device has its own 4TB hard drive.

For the backup itself, I wanted something that ran automatically, so I didn’t have to care about it.

On macOS, the obvious solution is Time Machine, which I have been using since it was introduced in Mac OS X Leopard, and it has saved my buttocks a few times.

Time Machine takes a system “snapshot”. Only the first copy is a full snapshot; after that it only copies changes, which is good as it saves storage.

With Linux, I was for a long time looking for something similar but could not find a suitable solution. I therefore used a cron job and dd to take a disk snapshot, then used gzip to compress it and stored it on an external disk. That was pretty cumbersome and annoying.
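For readers who want to try the dd and gzip approach, here is a minimal sketch in Python of what such a job could look like. It is not my original script; the function names, the file naming scheme, and the `bs=4M` block size are assumptions made for illustration. It needs to run as a user who can read the device, and it could be started from cron with a line such as `0 * * * * /usr/bin/python3 /path/to/snapshot.py` (a hypothetical path).

```python
import subprocess
from datetime import datetime
from pathlib import Path


def snapshot_name(device: str, when: datetime) -> str:
    """Build a timestamped file name, e.g. 'sda-20200101-0300.img.gz'."""
    return f"{Path(device).name}-{when:%Y%m%d-%H%M}.img.gz"


def snapshot_disk(device: str, target_dir: str) -> Path:
    """Stream a raw image of `device` through gzip into `target_dir`."""
    target = Path(target_dir) / snapshot_name(device, datetime.now())
    with open(target, "wb") as out:
        # Equivalent to: dd if=<device> bs=4M | gzip -c > <target>
        dd = subprocess.Popen(["dd", f"if={device}", "bs=4M"],
                              stdout=subprocess.PIPE)
        gz = subprocess.Popen(["gzip", "-c"], stdin=dd.stdout, stdout=out)
        dd.stdout.close()  # so dd gets SIGPIPE if gzip exits early
        gz_status = gz.wait()
        dd_status = dd.wait()
    if dd_status != 0 or gz_status != 0:
        raise RuntimeError(f"snapshot of {device} failed")
    return target
```

Calling `snapshot_disk("/dev/sda", "/mnt/backup")` (both paths are placeholders) would write one compressed image per run. Note that every run is a full copy, which is part of why this approach is cumbersome.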

But last year I found the tool Timeshift (GitHub), which is an “alternative” to Time Machine for Linux. It does close to the same job and is easy to use.

The benefit of both Time Machine and Timeshift (and cron jobs) is that they are set up to take a system backup every hour, meaning that I have a continuous backup of my systems with the newest data.

Another benefit of both Time Machine and Timeshift is that you can use the backups to set up new machines. I have used this feature a couple of times, and it takes away the hassle of remembering every tool you have installed.

Remote Backup

In addition to my Time Machine and Timeshift backups, I also have a remote server set up, which I back up to once a week. I have set up calendar events to remind me to do it, because I do not have this running on a schedule as I do with the local backups. Read: I still haven’t found a good way to do it on a schedule.

On Linux I still use dd and gzip here. On the Mac it is a bit more cumbersome, and I do not take a full system snapshot; I take a copy of my user folder and gzip it.

In both cases, I encrypt the data after compression, just for safety.
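The compress-then-encrypt step for a user folder can be sketched in Python roughly as follows. This is an illustration under assumptions, not my actual script: I have not said which encryption tool I use, so the example assumes GnuPG with symmetric AES256, and the function names are made up.

```python
import subprocess
import tarfile
from pathlib import Path


def archive_folder(folder: str, archive_path: str) -> Path:
    """Write a gzip-compressed tar archive of `folder`."""
    source = Path(folder)
    target = Path(archive_path)
    with tarfile.open(str(target), "w:gz") as tar:
        # Store paths relative to the folder name, not the full path.
        tar.add(str(source), arcname=source.name)
    return target


def gpg_encrypt_command(archive_path: str) -> list[str]:
    """Assumed tool: gpg with a passphrase (symmetric encryption)."""
    return ["gpg", "--symmetric", "--cipher-algo", "AES256",
            "--output", f"{archive_path}.gpg", archive_path]


def encrypt_archive(archive_path: str) -> None:
    """Encrypt after compression; gpg prompts for the passphrase."""
    subprocess.run(gpg_encrypt_command(archive_path), check=True)
```

Compressing first and encrypting second matters, because encrypted data looks random and barely compresses. The resulting `.gpg` file is what would be copied to the remote server.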

Cloud Backup

In addition to this, I also have a cloud backup of my pictures, documents, and other stuff. On macOS I use iCloud for this; it is convenient to select which folders I want to sync (to be honest, I just sync most of my user folder).

On Linux, it is a bit more annoying, because I have not found a useful tool that works like iCloud.

What I have done is set up Dropbox on my Linux machine, and then a cron job runs once every 5 hours (my devices are almost always on) and uses rsync to copy the content of the home folder to Dropbox.
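A minimal sketch of that job in Python, under a few assumptions: the Dropbox folder lives at `~/Dropbox`, the copy goes into a hypothetical `home-backup` subfolder, and the Dropbox folder is excluded so rsync does not copy it into itself. A cron entry such as `0 */5 * * *` comes close to "every 5 hours" (it fires at 0, 5, 10, 15, and 20 o'clock, so the last gap of the day is four hours).

```python
import subprocess
from pathlib import Path


def rsync_command(home: Path, dropbox_dir: Path) -> list[str]:
    """Build an rsync call that mirrors `home` into the Dropbox folder."""
    destination = dropbox_dir / "home-backup"  # hypothetical subfolder
    command = ["rsync", "-a"]
    try:
        # If Dropbox sits inside the home folder, skip it during the copy.
        inside = dropbox_dir.relative_to(home)
        command += ["--exclude", f"/{inside.as_posix()}/"]
    except ValueError:
        pass  # Dropbox is outside the home folder, nothing to exclude
    # The trailing slash on the source copies its contents, not the folder.
    command += [f"{home}/", f"{destination}/"]
    return command


def sync_home_to_dropbox() -> None:
    home = Path.home()
    subprocess.run(rsync_command(home, home / "Dropbox"), check=True)
```

The Dropbox client then uploads whatever lands in that folder, so the script itself never talks to the cloud.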

I know that there are tools that try to mimic iCloud sync, but the ones I have tried have failed on me.

Passwords

The keys to the castle, or just passwords, I keep locally in two locations on macOS and one on Linux, and in the cloud three times for macOS and twice for Linux. Locally on both, I use KeePassXC as a password manager with a local password database. On both Mac and Linux I sync this file to Dropbox (first cloud backup). On macOS, I also use the built-in Keychain Access app, which both keeps a local copy and syncs to iCloud (second cloud backup). Finally, on both macOS and Linux I use Firefox Lockwise for passwords and accounts as well.

Yes, I do replicate my accounts across all three password managers, because I have experienced 1Password failing on me previously, and that is not something I want to experience again.

Setup overview

To visualise my setup I have made a small “diagram” of the setup:

Future plans

Though I am pretty happy with my backup system at the moment, there is room for improvement. One of the things I want to add is one, maybe two, local NAS devices running FreeNAS, which will work with Time Machine and Timeshift, and another “remote” backup as well. I want to run this using RAID, preferably 5 or 6.
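To get a feel for what RAID 5 versus RAID 6 costs in capacity, here is a small back-of-the-envelope sketch in Python. RAID 5 gives up one disk's worth of space to parity and RAID 6 gives up two. The disk counts and sizes in the usage note are hypothetical, since I have not picked hardware yet.

```python
def usable_capacity_tb(disks: int, disk_tb: float, level: int) -> float:
    """Usable space of a RAID 5 or RAID 6 array of equally sized disks."""
    parity_disks = {5: 1, 6: 2}
    minimum_disks = {5: 3, 6: 4}
    if level not in parity_disks:
        raise ValueError("only RAID 5 and RAID 6 are covered here")
    if disks < minimum_disks[level]:
        raise ValueError(f"RAID {level} needs at least {minimum_disks[level]} disks")
    return (disks - parity_disks[level]) * disk_tb
```

With four 4TB disks, for example, `usable_capacity_tb(4, 4.0, 5)` gives 12 TB, while `usable_capacity_tb(4, 4.0, 6)` gives 8 TB but survives two failed disks instead of one.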

Testing backups

A backup is not worth anything if you do not know whether it works. Therefore you need to test your backups. For macOS, I have an older Mac lying around that I use for testing my Mac backups. For Linux, I spawn a virtual machine and create it using the backup, and from time to time I install on an older device to check that I can do a full system restore on physical hardware as well.
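A full restore is the real test, but a lighter check that can complement it is to compare checksums between the original files and a restored copy. The following Python sketch is an assumption about how one could do that, not part of my current routine, and the function names are made up for the example.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large files do not fill up memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def file_digests(root: Path) -> dict[str, str]:
    """Map each file's path relative to `root` to its SHA-256 digest."""
    return {
        path.relative_to(root).as_posix(): sha256_of(path)
        for path in root.rglob("*")
        if path.is_file()
    }


def restore_differences(original: Path, restored: Path) -> list[str]:
    """List files that are missing, extra, or changed after a restore."""
    before = file_digests(original)
    after = file_digests(restored)
    return sorted(name for name in before.keys() | after.keys()
                  if before.get(name) != after.get(name))
```

An empty list from `restore_differences` means every file came back byte for byte. Files that changed after the backup was taken will show up too, so this works best on folders that rarely change.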

Closing remarks

People may see my backup system as overkill, but as someone who researches storage systems and has worked on cloud storage systems myself, I know that your backup’s backup should have a backup, and that is why my system is so complicated.

My main issue with my overall setup is energy consumption. I would like to find a way to reduce that significantly. One thing I could do is to switch from hard drives to solid-state drives, but that will not cause a significant enough reduction. Additionally, I am looking at finding lower-power parts for the FreeNAS-based NAS I plan to build.